Abstract

Reinforcement-learning-driven model scaling in OpenSim

Musculoskeletal simulation depends on accurate subject-specific model scaling, but the scaling step still introduces a major real-to-sim gap in human motion analysis. Manual scaling is time-consuming, subjective, and difficult to reproduce, while optimization-based auto-scaling can be sensitive to initial values and local minima. These limitations can propagate into downstream analyses such as inverse kinematics, inverse dynamics, and joint-torque estimation.

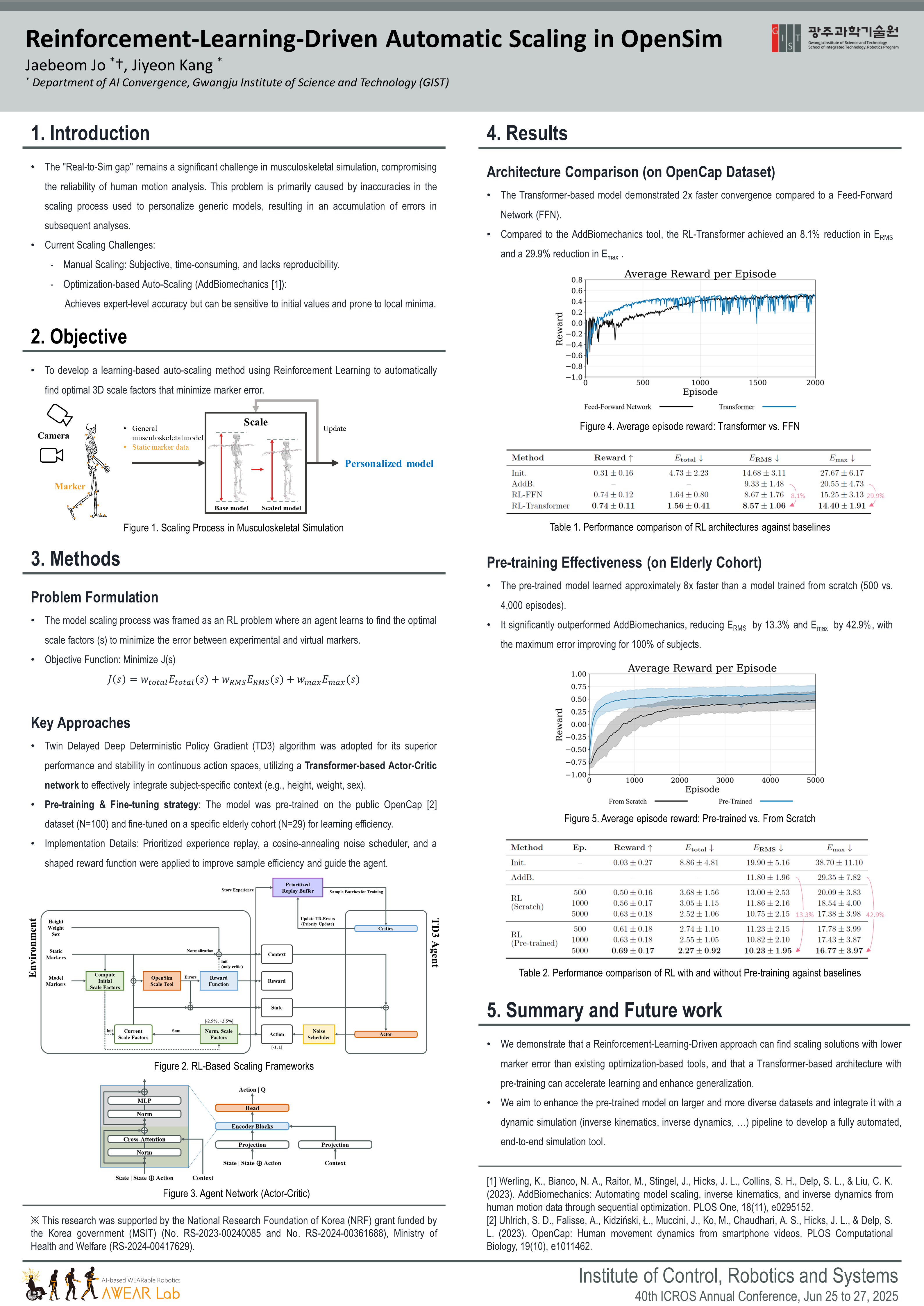

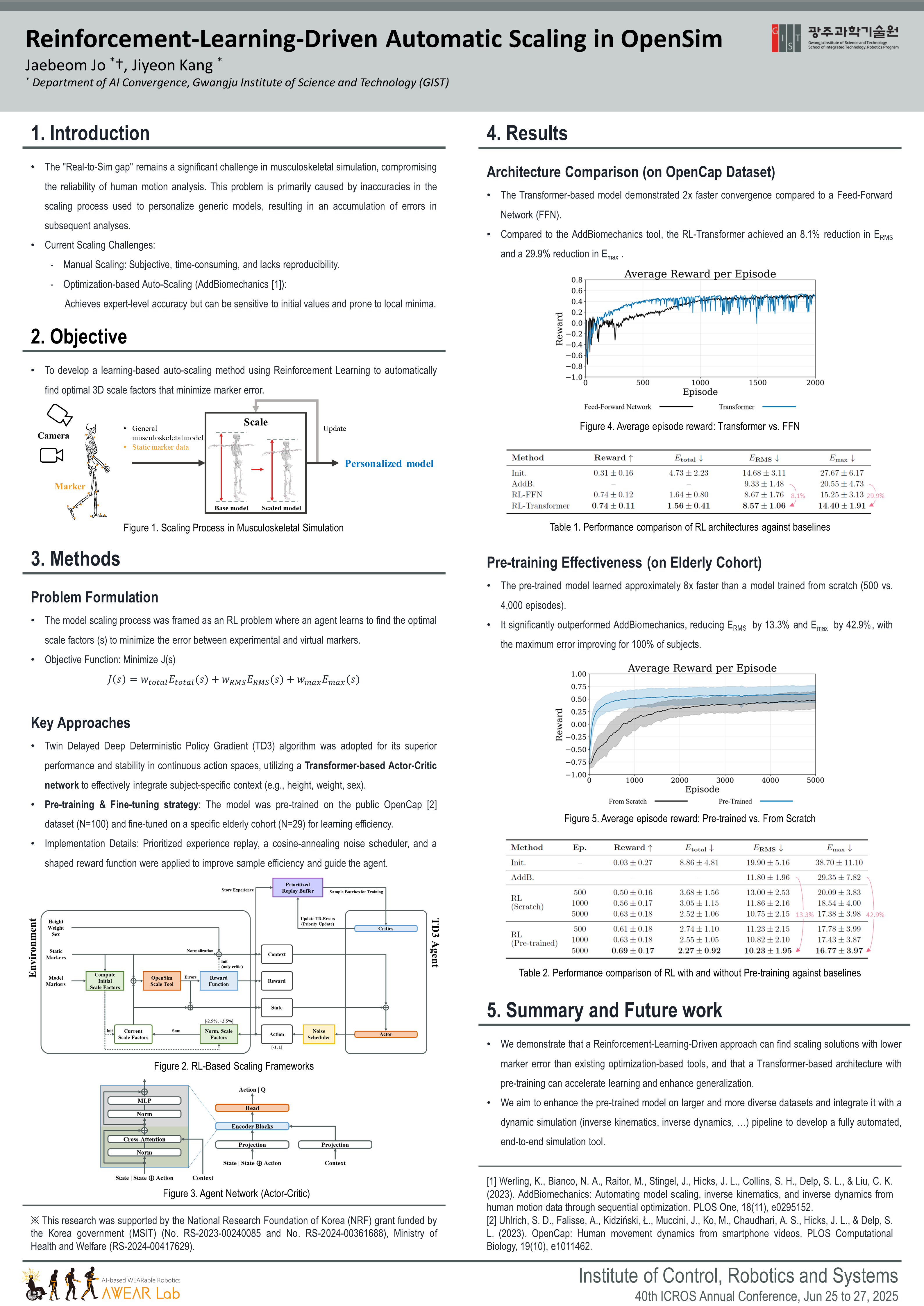

This project formulates OpenSim model scaling as a reinforcement-learning problem. Rather than relying on manual tuning or a single optimization run, an agent learns to search for 3D (per-axis) scale factors that minimize the error between experimental markers and the model's virtual markers. The framework uses a TD3 (Twin Delayed Deep Deterministic Policy Gradient) actor-critic architecture with a Transformer network, so that subject-specific context such as height, weight, and sex can be incorporated into the scaling policy.
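The formulation above can be illustrated with a minimal toy sketch. It is not the project's implementation: the function names (`marker_rmse`, `step`), the additive action, the `max_delta` clipping bound, and the negative-RMSE reward are all assumptions made for illustration, and the scaling is applied directly to marker coordinates in NumPy rather than through OpenSim.

```python
import numpy as np

def marker_rmse(scale, template_markers, experimental_markers):
    """RMS distance between scaled template markers and measured markers."""
    # Per-axis (x, y, z) scale factors, broadcast over all markers.
    virtual = template_markers * scale
    dists = np.linalg.norm(virtual - experimental_markers, axis=1)
    return float(np.sqrt(np.mean(dists ** 2)))

def step(scale, action, template_markers, experimental_markers, max_delta=0.05):
    """One hypothetical environment step: the action nudges the scale
    factors, and the reward is the negative marker error."""
    new_scale = scale + np.clip(action, -max_delta, max_delta)
    reward = -marker_rmse(new_scale, template_markers, experimental_markers)
    return new_scale, reward

# Toy data: four markers on a single segment (metres), with a known
# ground-truth scale used to synthesize the "experimental" markers.
template = np.array([
    [0.10, 0.00, 0.00],
    [0.00, 0.40, 0.00],
    [0.00, 0.00, 0.05],
    [0.10, 0.40, 0.05],
])
true_scale = np.array([1.10, 0.95, 1.05])
experimental = template * true_scale

scale = np.ones(3)  # start from the unscaled generic model
_, r_start = step(scale, np.zeros(3), template, experimental)
_, r_toward = step(scale, np.array([0.05, -0.05, 0.05]), template, experimental)
```

In this sketch, an action that moves the scale factors toward the ground truth yields a higher reward than leaving them unchanged, which is the signal an actor-critic agent such as TD3 would learn from.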

The Transformer-based RL model converged faster than a feed-forward architecture and produced lower marker errors than AddBiomechanics. Pre-training on OpenCap data also accelerated fine-tuning on an elderly cohort.

Poster

ICROS 2025 poster

The conference poster summarizes the project motivation, method, experimental setup, and main results.

Citation

The manuscript is currently in preparation. For now, cite the conference poster and the related master’s thesis as appropriate.

Conference Poster

@inproceedings{jo2025reinforcement,
  title = {Reinforcement-Learning-Driven Automatic Scaling in OpenSim for Enhanced Human Motion Analysis},
  author = {Jo, Jaebeom and Kang, Jiyeon},
  booktitle = {Proceedings of ICROS 2025},
  address = {Jeonju, Korea},
  month = jun,
  year = {2025},
  note = {Poster}
}

Master’s Thesis

@mastersthesis{jo2025joint,
  title = {Joint Torque-based Sarcopenia Assessment in Activities of Daily Living Using a Data-driven Simulation Framework},
  author = {Jo, Jaebeom},
  year = {2025}
}